Or rather, that is always the case with the Fourier convention we are using here. We compute the variances and the squared norms with v0 = Integrate^2, ]įrom here we can calculate that the ratio of the left side to the right side of the uncertainty principle is 5/18, which is larger than the lower bound C = 1/4.īy the way, it’s not a coincidence that h and its Fourier transform have the same norm. We will evaluate both sides of the Fourier uncertainty principle with h := 1/(x^4 + 1)Īnd its Fourier transform g := FourierTransform, x, w] If f is proportional to the density of a normal distribution with standard deviation σ, then its Fourier transform is proportional to the density of a normal distribution with standard deviation 1/σ, if you use the radian convention described in. And in this case the uncertainty is easy to interpret. The inequality is exact when f is proportional to a Gaussian probability density. This form is scale invariant: if we multiply f by a constant, the numerators and denominators are multiplied by that constant squared.

Rather than look at the variances per se, we look at the variances relative to the size of f. Perhaps a better way to write the inequality isįor non-zero f. Here || f|| 2² is the integral of f², the square of the L² norm. Where the constant C depends on your convention for defining the Fourier transform. The Fourier uncertainty principle is the inequality It is not limited to the case of | f( x)|² being a probability density, i.e. The Fourier variance defined above applies to any f for which the integral converges. In other words, we multiply f by its complex conjugate, not by itself. In quantum mechanics applications, however, f is complex-valued and | f( x)|² is a probability density. Since we said f is a real-valued function, we could leave out the absolute value signs and speak of f( x)² being a probability density. The twist alluded to above is that f is not a probability density, but | f( x)|² is.

This is the variance of a random variable with mean 0 and probability density | f( x)|². If f is a real-valued function of a real variable, its variance is defined to be Variance in Fourier analysis is related to variance in probability, but there’s a twist. When you read “variance” above you might immediately thing of variance as in the variance of a random variable. The Fourier uncertainty principle gives a lower bound on the product of the variance of a function and the variance of its Fourier transform.

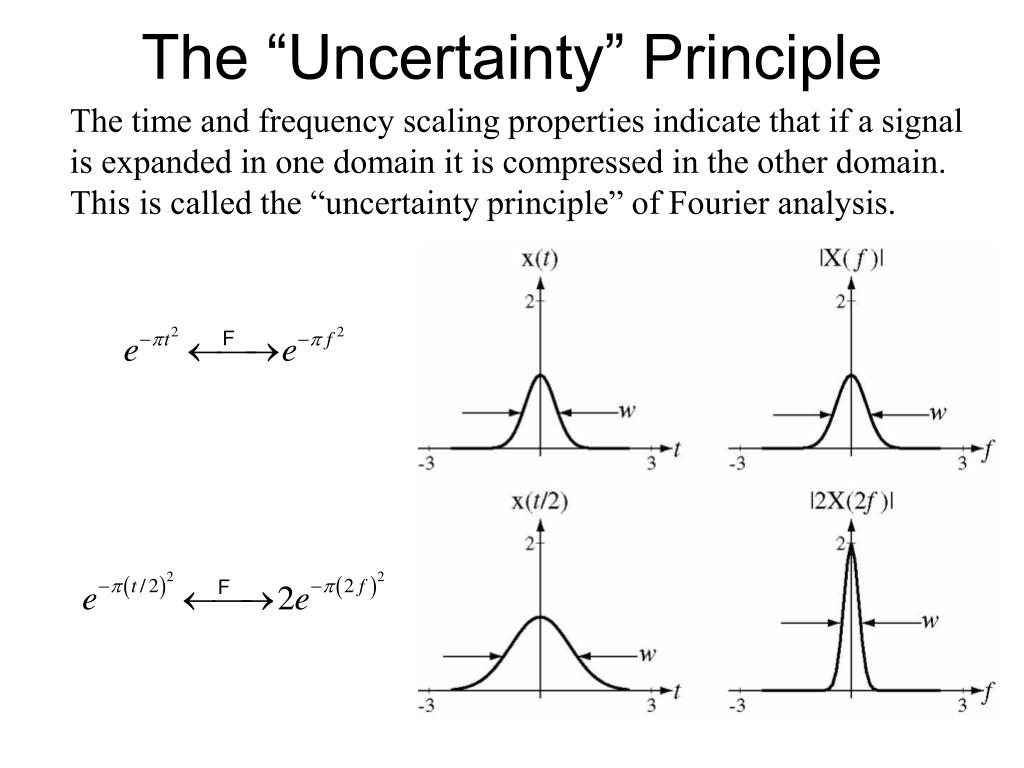

This post will look at the form closest to the physical uncertainty principle of Heisenberg, measuring the uncertainty of a function in terms of its variance. There are many ways to quantify how localized or spread out a function is, and corresponding uncertainty theorems. The more concentrated a signal is in the time domain, the more spread out it is in the frequency domain. There is a closely related principle in Fourier analysis that says a function and its Fourier transform cannot both be localized. The product of the uncertainties in the two quantities has a lower bound. Heisenberg’s uncertainty principle says there is a limit to how well you can know both the position and momentum of anything at the same time.
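As a rough numerical cross-check of the example with h(x) = 1/(x⁴ + 1), here is a minimal Python sketch. It is not the Mathematica computation from the post. It assumes the unitary, angular-frequency Fourier convention (the one under which h and its transform have the same norm and C = 1/4). Under that assumption the variance of the transform equals the integral of h′(x)², so the transform itself never has to be computed. The helper names `simpson` and `integrate_even`, and the truncation point `L`, are illustrative choices only.

```python
def h(x):
    return 1.0 / (x**4 + 1.0)

def h_prime(x):
    # Derivative of h, worked out by hand: d/dx (x^4 + 1)^(-1)
    return -4.0 * x**3 / (x**4 + 1.0) ** 2

def simpson(f, a, b, n):
    """Composite Simpson's rule on [a, b] with n subintervals (n made even)."""
    if n % 2:
        n += 1
    step = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * step)
    return total * step / 3.0

# Truncate the real line to [-L, L]; the integrands decay at least like x^-6,
# so the neglected tails are tiny for L = 50.
L, N = 50.0, 100_000

def integrate_even(f):
    # All integrands here are even functions, so integrate [0, L] and double.
    return 2.0 * simpson(f, 0.0, L, N)

v0 = integrate_even(lambda x: x**2 * h(x) ** 2)   # variance of h
n0 = integrate_even(lambda x: h(x) ** 2)          # squared L2 norm of h
v1 = integrate_even(lambda x: h_prime(x) ** 2)    # variance of the transform (assumed convention)
n1 = n0                                           # same norm under the assumed convention

ratio = (v0 / n0) * (v1 / n1)
print(ratio, 5 / 18)  # the two numbers should agree to several decimal places
```

Using the derivative identity rather than a numerical Fourier transform keeps the sketch short, at the cost of hard-wiring the convention. With a different convention the value of `v1`, and the constant C, would be rescaled.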
